Over-used futurist quote

Ever heard that oft-repeated tech quote, usually attributed to IBM’s Thomas Watson, "I think there is a world market for maybe five computers"? Oh how we laugh! How stupid could these old timers be! But I groan when I hear this quote or see it on a PowerPoint slide by some showboating presenter who describes him or herself as a ‘futurist’. My most recent experience was at an OEB keynote in Berlin. For me, it has become a touchstone. Whenever I see or hear it, my bullshit alarm rings and I’m eyes down on my laptop doing something else. I groan, first, because it’s an overused cliché, used by lazy speakers, and second, because the quote is a lie.

No evidence

Kevin Maney tried but failed to find the quote in any contemporary document, speech or account about Watson or IBM. It doesn’t appear anywhere until over 40 years after Watson is supposed to have said it in 1943, first in a book by Cerf and Navasky (1984). Unfortunately, the quote seems to have been lifted from an earlier book, Facts and Fallacies. It then pops up in a newspaper column (May 1985) and seems to have spread virally on the nascent internet from its first mention on Usenet, in 1986.

The fact that the quote was a myth was even discussed as early as 1985 and certainly in the Economist in 1973, "revealing that Watson never made his oft-quoted prediction that there was 'a world market for maybe five computers.'" Of course, the absence of evidence does not mean that it didn’t happen, but the next time an overeager academic quotes this on his or her slides, I’m going to call for a timeout and ask for a citation.

Watson’s no saint

Although we can absolve him from blame on the quote, Watson did something far worse. He flew to meet Hitler in 1937 and sold him a primitive punch-card Learning Management System. As told in the excellent book IBM and the Holocaust by Edwin Black, it stored data on skills, race and sexual orientation. Jews, Gypsies, the disabled and homosexuals were identified and selected for slave labour and death trains to the concentration camps. I once mentioned this to Elliott Masie at a breakfast meeting in Florida. He went apeshit, as it was a rather uncomfortable truth. (IBM was a sponsor of his conference.)

Einstein

Next up is Einstein. I once wrote a spoof blog about a course generator I had invented, which automatically generated schemas of words all beginning with 'C', along with an Einstein quote generator. No look at the future is complete without an Einstein quote. Yet many of them he never said, and some are just made up. Take "Everyone is a genius. But if you judge a fish by its ability to climb a tree, it will live its whole life believing that it is stupid." He never said it. "Play is the best form of research." He never said it. Or "The definition of insanity is doing the same thing over and over and expecting different results." He never said that either. There are literally dozens of these.

Not just quotes

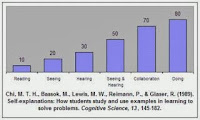

Ever seen this graph, or one like it? It's still a staple in education, training and teacher training courses. I've seen it this year in a Vice Chancellor's talk at a university and in one by the CDO of a major learning company. It’s bogus.

A quick glance is enough to raise suspicion. Any study that produces a series of results bang on multiples of ten should look highly suspect to anyone with the most basic knowledge of statistics.

But it’s worse than nonsense. The lead author of the cited study, Dr. Chi of the University of Pittsburgh, a leading expert on ‘expertise’, when contacted by Will Thalheimer, who uncovered the deception, said, "I don't recognize this graph at all. So the citation is definitely wrong; since it's not my graph." What’s worse is that this image and variations of the data have been circulating in thousands of PowerPoints, articles and books since the 60s.

Further investigations of these graphs by Kinnamon (2002, personal communication) found dozens of references to these numbers in reports and promotional material. Michael Molenda (2003, personal communication) did a similar job. Their investigations found that the percentages have even been modified to suit the presenter’s needs.

The one here is from Bersin (recently bought by Deloitte). Categories have even been added to make a point (e.g. that teaching is the most effective method of learning). The root of the problem is Edgar Dale’s depiction of types of learning, from the abstract to the concrete. He put no numbers on his ‘cone of experience’ and regarded it as a visual metaphor implying no hierarchy at all.

Serious-looking histograms can look scientific, especially when supported by bogus academic research. They create the illusion of good data. This is one of the most famous examples of not ‘Big’ but ‘Bad’ data in the history of learning.

Conclusion

Lessons from all this? So-called 'futurists' largely just plunder debris from the past. To be honest, even if Watson did say that misused quote, he would have been justified, as it was years before computers were to move beyond mainframes. Interestingly, with the newly resurrected Watson, IBM is going back to a massive computer with a set of algorithms, available on the cloud, to deliver solutions to a myriad of problems. ‘Five’ may also not be too wide of the mark: a giant server farm for each of Google, Facebook, Apple, Microsoft and Amazon?